publications

publications by categories in reversed chronological order. generated by jekyll-scholar.

2026

-

Pareto-Conditioned Diffusion Models for Offline Multi-Objective Optimization

Jatan Shrestha*, Santeri Heiskanen*, Kari Hepola, and 3 more authors

In The Fourteenth International Conference on Learning Representations, 2026

This work was accepted as an oral presentation.

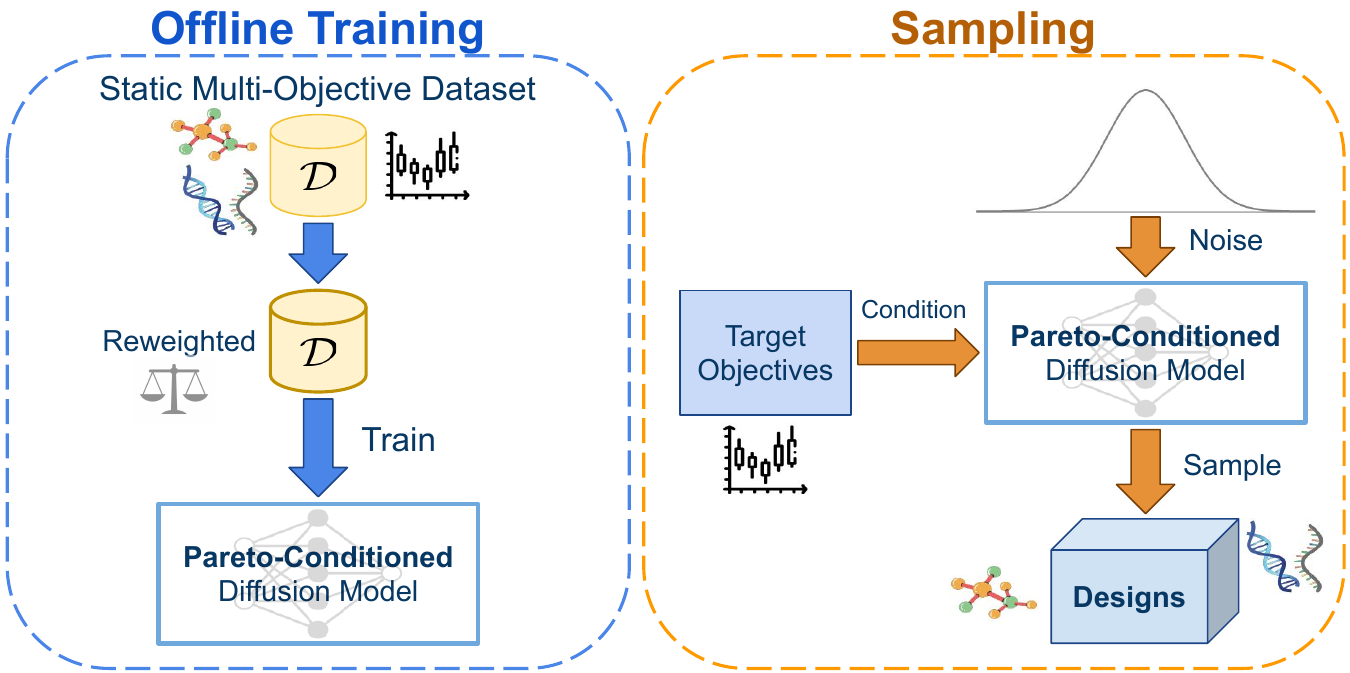

Multi-objective optimization (MOO) arises in many real-world applications where trade-offs between competing objectives must be carefully balanced. In the offline setting, where only a static dataset is available, the main challenge is generalizing beyond observed data. We introduce Pareto-Conditioned Diffusion (PCD), a novel framework that formulates offline MOO as a conditional sampling problem. By conditioning directly on desired trade-offs, PCD avoids the need for explicit surrogate models. To effectively explore the Pareto front, PCD employs a reweighting strategy that focuses on high-performing samples and a reference-direction mechanism to guide sampling towards novel, promising regions beyond the training data. Experiments on standard offline MOO benchmarks show that PCD achieves highly competitive performance and, importantly, demonstrates greater consistency across diverse tasks than existing offline MOO approaches.

@inproceedings{shrestha2026paretoconditioned,
  title = {Pareto-Conditioned Diffusion Models for Offline Multi-Objective Optimization},
  author = {Shrestha, Jatan and Heiskanen, Santeri and Hepola, Kari and Rissanen, Severi and Jääskeläinen, Pekka and Pajarinen, Joni},
  booktitle = {The Fourteenth International Conference on Learning Representations},
  year = {2026},
}

-

Network Modernization Improves Multi-Objective Reinforcement Learning

Adam Štafa, Santeri Heiskanen, Petr Novotný, and 1 more author

In Reinforcement Learning Conference, 2026

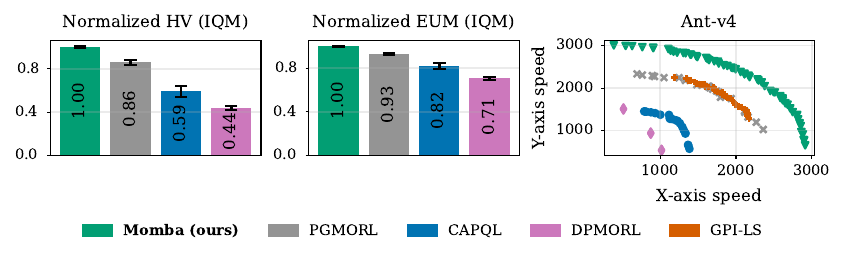

Recent advances in deep reinforcement learning (RL) have shown that improving neural network architectures can yield substantial gains in sample efficiency and asymptotic performance without altering the underlying algorithms. In contrast, work on multi-objective reinforcement learning (MORL), which aims to discover a set of policies that balance trade-offs among conflicting objectives, has predominantly focused on algorithmic innovations, leaving the area of architectures underexplored. While the optimal policies and value functions can differ significantly depending on the trade-offs, MORL algorithms commonly represent them with simple feedforward networks conditioned on the trade-off. This raises the question of whether the performance of the algorithms could be improved with more expressive function approximators. In this paper, we integrate recent advances in neural network design: (i) observation and feature normalization, (ii) weight normalization, and (iii) modeling of distributional returns with an entropy-regularized MORL algorithm. The empirical results across standard continuous control benchmarks demonstrate that these changes substantially improve the quality of the produced solution sets without requiring major changes to the underlying algorithm.

@inproceedings{stafa2026momba,
  title = {Network Modernization Improves Multi-Objective Reinforcement Learning},
  author = {Štafa, Adam and Heiskanen, Santeri and Novotný, Petr and Pajarinen, Joni},
  booktitle = {Reinforcement Learning Conference},
  year = {2026},
  url = {https://openreview.net/forum?id=EK6T83MVsr},
}